Perkins School for the Blind

Since before Helen Keller attended, Perkins has provided children and young adults who are blind or visually impaired with the skills, education, and confidence they need to realize their potential. Perkins partnered with Rightpoint to solve a real-world problem faced by millions of people.

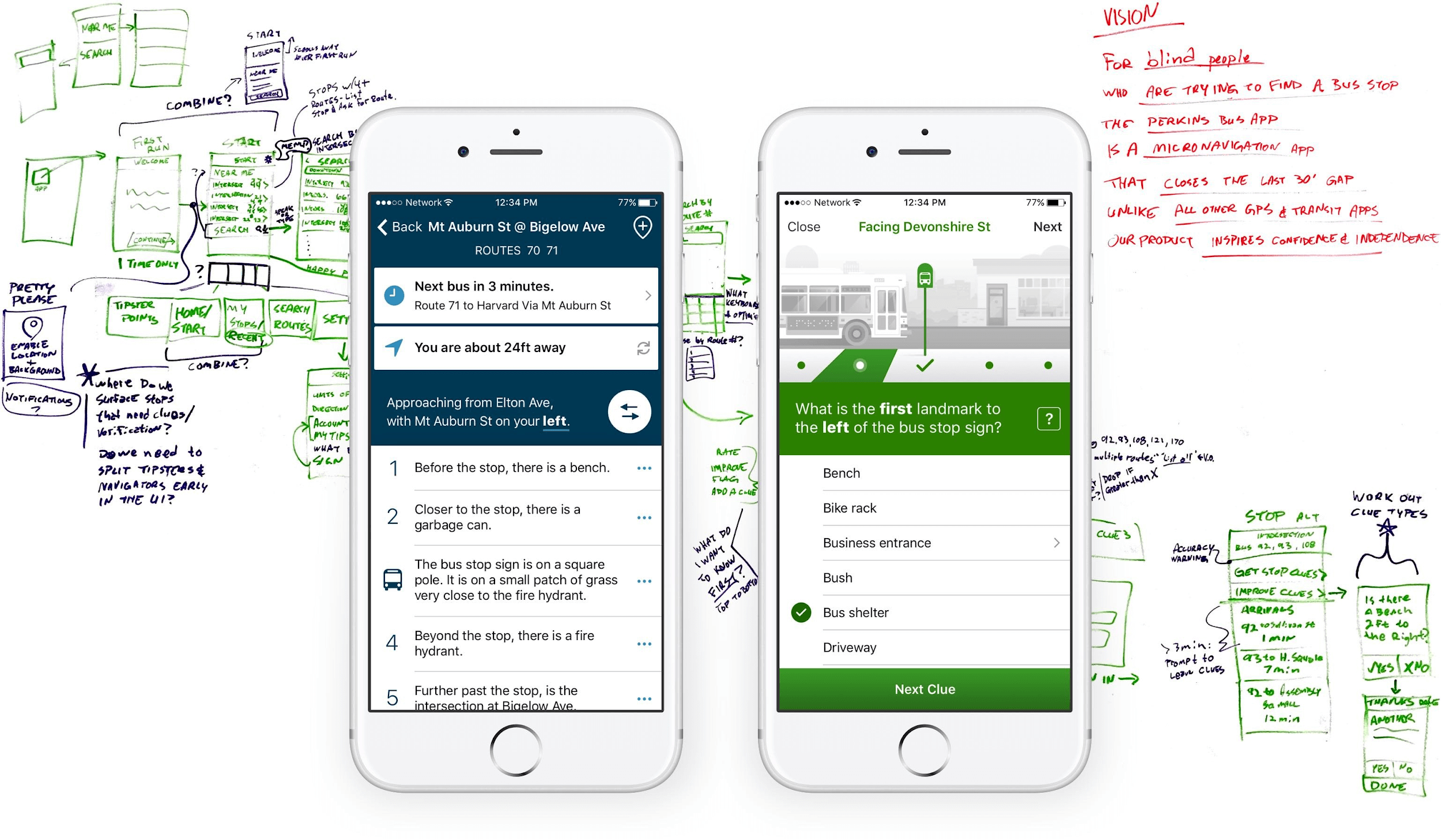

Help visually impaired commuters locate MBTA bus stops and travel with confidence and independence.

The iPhone is an indispensable tool for doing just that, with native accessibility features like Dynamic Type and the VoiceOver screen reader. Specialized apps provide voice prompts to help navigate the city. But even in this age of ubiquitous GPS, directions often fall short, guiding commuters only to within 30 feet of a bus stop. Imagine waiting for a bus, only to have it zoom by because you were standing at the wrong signpost.

Google awarded Perkins School for the Blind a grant to develop a solution to this 30 Foot Problem using crowdsourced data. Our challenge was to close the GPS gap, making Massachusetts Bay Transportation Authority (MBTA) buses accessible to everyone in the Greater Boston region.

The academic literature on transit, wayfinding, computer vision, crowdsourcing, and navigation for the visually impaired provided a broad foundation. But the sharpest insights came directly from users with visual impairments. One-on-one interviews helped us understand their everyday experiences with public transportation and their strategies for navigating the world with a cane. The interviews also helped us clarify assumptions, identify new opportunities, and fine-tune usability and accessibility.

We learned how important micro-navigation is: using common street features like driveways, steps, fire hydrants, landscaping, and curb cuts to orient to the environment. This led to competing models for describing a bus stop by the physical landmarks surrounding it. Each time we hit the streets to test our prototypes with our blind collaborators from Perkins, we refined how the sequence of clues helps to precisely locate the bus stop. We also developed a parallel experience for volunteer tipsters to add these clues in an intuitive and engaging way.
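To make the idea of a clue sequence concrete, here is a minimal sketch of one way such a model could be represented. It is written in Python for brevity and is not the BlindWays implementation: the `Clue` type, the `distance_ft` field, and both helper functions are hypothetical names introduced for illustration, and the sketch assumes every clue lies along a single approach to the stop.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Clue:
    """One crowdsourced landmark near a bus stop (hypothetical model)."""
    landmark: str       # e.g. "driveway", "fire hydrant", "curb cut"
    distance_ft: float  # assumed: distance from the stop along the sidewalk


def ordered_clues(clues):
    """Order clues farthest-first, as a commuter walking to the stop meets them."""
    return sorted(clues, key=lambda clue: clue.distance_ft, reverse=True)


def spoken_sequence(clues):
    """Join the ordered landmarks into one phrase, ending at the stop itself."""
    names = [clue.landmark for clue in ordered_clues(clues)]
    return ", then ".join(names + ["the bus stop"])


if __name__ == "__main__":
    tips = [
        Clue("fire hydrant", 8),
        Clue("driveway", 25),
        Clue("curb cut", 15),
    ]
    print(spoken_sequence(tips))
```

Ordering by distance is only one possible choice. The competing models mentioned above may have organized clues differently, for example by direction of approach.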

Meanwhile, the engineering team integrated transportation agency data for bus stop locations and real-time arrivals. To meet our users’ accessibility needs, we optimized for Dynamic Type scaling and fine-tuned raw data for naturalistic phrasing when read aloud by VoiceOver.
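As an illustration of what tuning raw data for naturalistic phrasing can involve, the sketch below expands abbreviations in a stop name before it is handed to a screen reader. It is written in Python for brevity and is not the app's actual code: the abbreviation table, the function name `spoken_stop_name`, and the sample stop names are assumptions made for this example.

```python
import re

# Hypothetical abbreviation table; a production list would need to be longer
# and would have to handle ambiguous cases ("St" can also mean "Saint").
SPOKEN_FORMS = {
    "St": "Street",
    "Ave": "Avenue",
    "Rd": "Road",
    "Sq": "Square",
    "opp": "opposite",
}

# Match any listed abbreviation as a whole word, with an optional trailing period.
_ABBREVIATION = re.compile(
    r"\b(" + "|".join(re.escape(key) for key in SPOKEN_FORMS) + r")\b\.?"
)


def spoken_stop_name(raw_name: str) -> str:
    """Return a stop name rewritten so that it reads naturally aloud."""
    text = raw_name.replace("@", " at ")
    text = _ABBREVIATION.sub(lambda match: SPOKEN_FORMS[match.group(1)], text)
    # Collapse the extra spaces introduced by the "@" replacement.
    return " ".join(text.split())


if __name__ == "__main__":
    print(spoken_stop_name("Massachusetts Ave @ Newbury St"))
```

The word-boundary match keeps the expansion from touching longer words that merely start with an abbreviation, such as "Station".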

After seven months of research, testing, and development, BlindWays hit the App Store in September 2016. Many community influencers and news sources, including the Boston Globe, spoke up about the impact of BlindWays on the community.

Mobile and Emerging Technology

iOS